Introduction

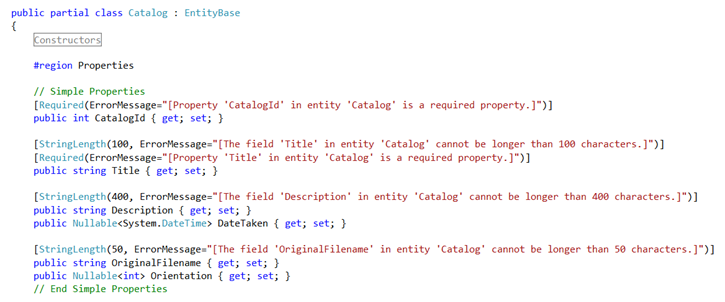

When we left off in Part 1, we had edited the T4 template which generates Entity Framework POCO entities and had it add appropriate attributes to assist in data validation checks.

The next step is to show you how we can make use of these annotations.

Note: the data access code has been updated since this article was first published. Refer to this article for the latest code.

Decorated Entities

At the end of Part 1, we had managed (hopefully) to generate classes with data annotations similar to the following:

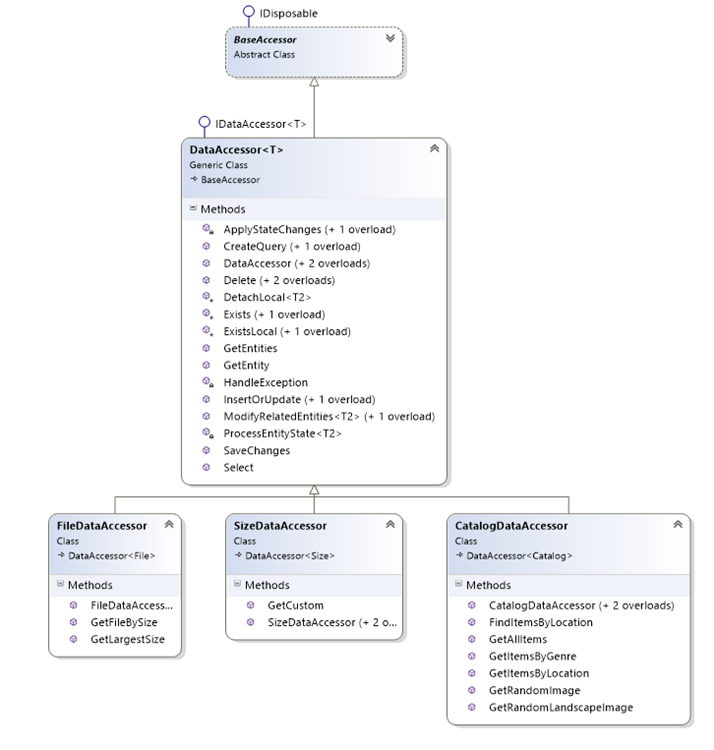

Now that these annotated classes exist, it is time to revisit the data access approach I introduced in the ‘Flexible Data Operations’ article. That approach consolidates most of the essential “CRUD” (create, read, update, delete) functionality and, more importantly, protects the Entity Framework DbContext from use outside the parent assembly.

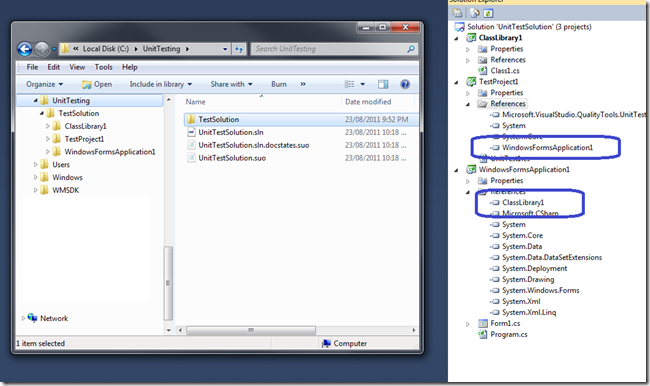

Here’s a view of the appropriate data access classes:

The bulk of the work is performed by the DataAccessor<T> class, which can be instantiated directly by supplying a generic type, or invoked through type-specific data accessors which provide some query re-use.

One of the main attractions of this design is uniformity – core operations are performed the same way regardless of scope or context. Therefore, implementing a data validation regimen is pretty straightforward.

Data Validation at Runtime

If you were to validate an object manually, the following code would suffice (provided the object has annotations, of course):

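    // using System.Collections.Generic;
    // using System.ComponentModel.DataAnnotations;
    var results = new List<ValidationResult>();
    if (!Validator.TryValidateObject(item, new ValidationContext(item, null, null), results, true))
    {
        // validation failed; results now holds one ValidationResult per failure
    }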
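For a self-contained illustration of the snippet above, here is a minimal sketch. The `SketchItem` class and its attributes are hypothetical stand-ins for the generated entities, not the actual classes from Part 1, and `ManualValidationSketch` is just a name I've picked for the example.

```csharp
using System;
using System.Collections.Generic;
using System.ComponentModel.DataAnnotations;

// Hypothetical stand-in for a generated entity; not the article's actual class.
public class SketchItem
{
    [Required]
    [StringLength(100)]
    public string Title { get; set; }
}

public static class ManualValidationSketch
{
    // Returns the validation failures for an annotated object (empty when valid).
    public static List<ValidationResult> Validate(object item)
    {
        var results = new List<ValidationResult>();
        // Passing true for validateAllProperties checks every annotated property;
        // with false, only [Required] attributes are evaluated.
        Validator.TryValidateObject(item, new ValidationContext(item, null, null), results, true);
        return results;
    }

    public static void Main()
    {
        var tooLong = new SketchItem { Title = new string('x', 101) };
        foreach (ValidationResult failure in Validate(tooLong))
        {
            Console.WriteLine(failure.ErrorMessage);
        }
    }
}
```

Note the final `true` argument: without it, a too-long Title would pass unnoticed because only required-field checks would run.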

With this approach it’s quite straightforward to include validation: the current design already processes object state changes whenever an entity (or a collection of entities) is added or updated. Within the DataAccessor<T> class, I simply needed to include the following logic:

    private void ApplyStateChanges<T2>(params T2[] items) where T2 : EntityBase
    {
        var results = new List<ValidationResult>();
        bool isValid = true;

        foreach (T2 item in items)
        {
            if (!Validator.TryValidateObject(item, new ValidationContext(item, null, null), results, true))
            {
                isValid = false;
            }
        }

        if (!isValid)
        {
            string errorMessages = results.Select(result => result.ErrorMessage)
                                          .Aggregate((a, b) => a + ", " + b);

            throw new ValidationException(String.Format(
                "Can not apply current changes, validation has failed for one or more entities: {0}",
                errorMessages));
        }

        //else continue
    }

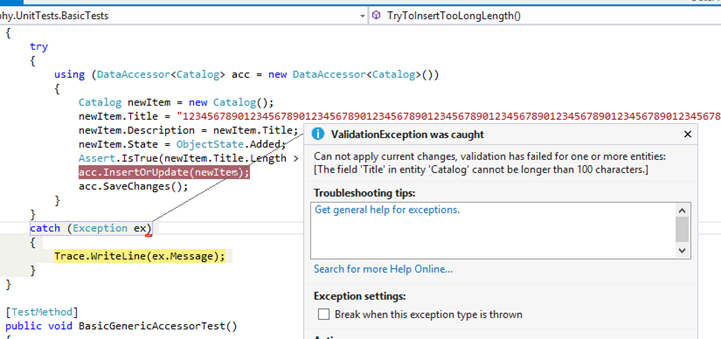

Testing the Validation

To test out the validation, I’ve written a basic unit test which tries to add a new item with a Title field which will be too long (101 characters), and therefore should fail validation during the InsertOrUpdate call:

    [TestMethod]
    [ExpectedException(typeof(ValidationException), "Should throw a ValidationException")]
    public void TryToInsertTooLongLength()
    {
        using (DataAccessor<Catalog> acc = new DataAccessor<Catalog>())
        {
            Catalog newItem = new Catalog();
            newItem.Title = "12345678901234567890123456789012345678901234567890123456789012345678901234567890123456789012345678901";
            newItem.Description = newItem.Title;
            newItem.State = ObjectState.Added;
            Assert.IsTrue(newItem.Title.Length > 100);
            acc.InsertOrUpdate(newItem);
            acc.SaveChanges();
        }
    }

Result..

As I mentioned in Part 1, this is really a preventative measure (a last resort of sorts), so it isn’t intended to be an interactive approach. What it does provide though are two very useful features:

- Runtime validation that catches really obvious problems which would otherwise fail when you try to commit the changes.

- A decent level of information for logging purposes, so you can easily discover why a change was rejected.

Notice that I modified the message to be more descriptive about which entity the validation failed on, and on which property – and why.
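One way such a message could be assembled is sketched below. This is an assumption on my part about the general shape, not the exact code behind the result shown: `ValidationMessageSketch`, `Describe` and `SketchCatalog` are hypothetical names, and the sketch validates a single item at a time so that each failure can be tied back to its entity type.

```csharp
using System;
using System.Collections.Generic;
using System.ComponentModel.DataAnnotations;
using System.Linq;

// Hypothetical entity used only to exercise the sketch.
public class SketchCatalog
{
    [StringLength(100)]
    public string Title { get; set; }
}

public static class ValidationMessageSketch
{
    // Validates one item and returns one line per failure in the form
    // "<EntityType>.<Property>: <reason>".
    public static List<string> Describe(object item)
    {
        var results = new List<ValidationResult>();
        Validator.TryValidateObject(item, new ValidationContext(item, null, null), results, true);

        string entityName = item.GetType().Name;
        return results
            .Select(r => String.Format("{0}.{1}: {2}",
                entityName,
                String.Join("/", r.MemberNames), // property (or properties) that failed
                r.ErrorMessage))                 // the reason reported by the attribute
            .ToList();
    }
}
```

Calling `Describe` inside the `foreach` loop of ApplyStateChanges and collecting the returned lines would give the exception message the entity, the property and the reason for each failure.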

Summary

Well, we’re getting nearer to a fully articulated data access approach. In fact, I’ve already put a working solution “into production” by launching a website which uses all the implementation I’ve covered in the past couple of weeks.

You can check it out right now – http://www.rs-photography.org.

The site is hosted on Windows Azure, and the data is stored in SQL Azure. I’ll be documenting what the process was like when publishing the site and data to Windows Azure, and some handy tips and tricks.

Next week I’ll also be writing a few more articles explaining some of the functional benefits of what I’ve built to date, including an updated sample solution.

Bonus Stuff

Came across this handy roadmap for Windows Phone 8 development.