If you’ve spent any amount of time in the IT industry – and particularly in software development – you’ve probably been asked to forecast the effort associated with some kind of work, outcome or deliverable.

Software development is tricky as it involves some degree of guess work – requirements can often be vague or ill-defined; the solution(s) may not be clear or obvious, you you may be dealing with too many unknowns.

There are two main ways to estimate project/effort costs – “top down estimating” and “bottom-up estimating”. I’ve a lot of experience at both, but more so with bottom-up estimation. Bottom-up estimation establishes effort forecasts based on the breakdown of work to be undertaken, with numbers given to granular units of work, which are then combined to form a ‘total effort’ estimate.

As someone with a long history in software development (15+ years), I’ve become more comfortable working with tasks and specifically defined units of work – particularly since they tend to lend themselves to being applied to solution design in a more meaningful way.

When I worked at Microsoft from 2005 to 2007, I was introduced to a very handy methodology to develop bottom-up costing and effort estimations. This methodology involved the application of handwritten utilities, such as Excel and Visio which combined spread sheet calculations with a visual approach (called ‘feature maps’) to help guide clients in understanding their own requirements.

Using this methodology as a starting point, I’ve developed my own simple estimation tool which takes a more simplified view of the more complex tools I’ve used in the past. To understand how to leverage this utility, I need to explain the concepts which underpin the numbers.

The Estimation Spread sheet

The spread sheet is split into a number of work sheets. Each sheet contains information and values pertinent to the calculation of estimated effort (in single person hours/weeks/years).

The idea is to produce a raw gross effort estimate, which can be divided by the number and type of resources. This would compliment an MS Project style project plan or help to underpin effort estimates against tasks in Team Foundation Server, for example.

The work sheets are as follows:

| Work Sheet | Usage |

| Deliverables |

Assigns effort to outcomes based on discipline responsible for primary work |

| Effort Calculations | Shows work breakdown (read only) |

| Complexity | Allows the calibration of the distribution of effort and the number of hours per complexity |

| Requirement Mapping | Assigns complexity estimates to requirements |

Essentially, you just need to configure the Complexity page first, then assign complexity estimates to requirements. This will produce all the effort calculations. Sounds easy, right?

Step 1: Configure the effort complexities

The idea behind my own approach is to define a set of “complexities” which experienced subject matter experts (SMEs) can apply to units of work. This isn’t rocket science, the definitions and their application to requirements is based on the discretion (and experience) of those participating in the forecasting exercise.

Conceptually, the idea is to define a range of values and balance them against the type of work which would need to be undertaken to finish a unit of work. These definitions are defined in the spread sheet on the Complexity work sheet:

The idea here is that for each unit of work, the effort will be split across a number of disciplines, i.e. “Design”, “build”, “Test” and “Document”. The weighting affects how the total effort for a complexity will be split between these disciplines. To make this clearer, here are my rough definitions for each discipline:

| Discipline | Definition |

| Design | Plan, design and document a solution for a requirement/feature/implementation – including solution design and any related artefacts |

| Build | The task of actually building or supporting a unit of work, including unit testing and documentation |

| Test | The task of fully testing the unit of work, could be automated/manual or include system integration testing |

| Document | This is strictly intended to capture effort for end user documentation, user guides/administration and build notes/release notes etc. |

Note that many different team members could play a role in each of these disciplines. In some cases, a discipline may not even apply – for example something estimated as “Easy” may have no “Document” effort, as it would either not require explicit documentation, or could be potentially covered by a parent task/unit of work.

The important numbers on this tab are the “Total Hours” and the weight distribution between the disciplines. Toggle these values until you get definitions for each complexity that you’re comfortable with.

Step 2: Assign complexity to requirements

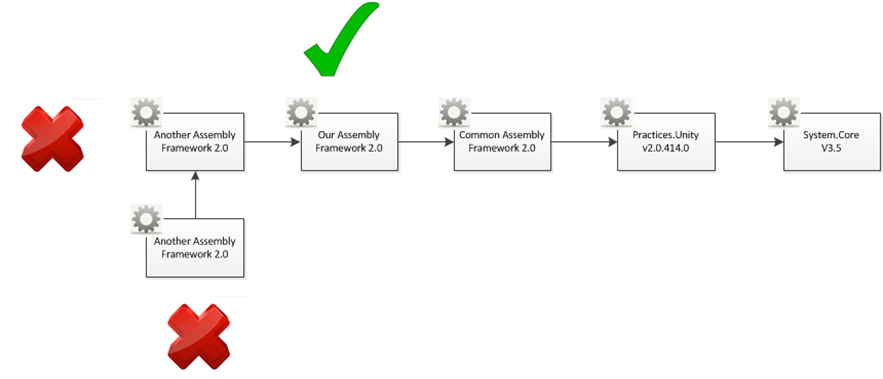

The idea behind the requirement mapping and effort estimation worksheet is to allow subject matter experts (typically a team of people who would perform the work indicated) can assign an estimated complexity rating to units of work.

Complexity is assigned to areas of design and implementation:

| Area | Definition |

| UI (User Interface) | Any work involving the development, documentation of or delivery of applications or programs which users will use to interact with a solution. May include workflow, validation rules etc. |

| Interface/Integration | Any interface which is defined between systems or applications, or between tiers. May include workflow, validation rules, system integration etc.. |

| Schema/DB | This represents the definition of data or data storage which may be required, including supporting tasks like ETL. May include reporting, maintenance etc.. |

This also allows for traceability from requirements (functional/non-functional) to effort, and can help the team define logical packages of work. The complexity estimates are just so – an estimate provided by a person (or people) who have a background in developing and delivering work stated in the requirements.

What this does is effectively assign gross averages of hours against units of work. The definition of the complexities can be modified to suit the experience/expertise and performance of the team or people who might actually perform the work.

In this example, if parallel (peer) tasks are related and the team feels comfortable, a complexity rating can be assigned to the parent task/requirement instead of having to specify a complexity for each unit of work – or indeed, even for each part of a solution.

The idea is to continually review the tasks and complexity estimates (about three or four full passes) and to complete a few tweaks to the complexity breakdown before you’ll arrive at numbers which might look halfway accurate. Remember, this is quite flexible, but the idea is to try and account for all the effort.

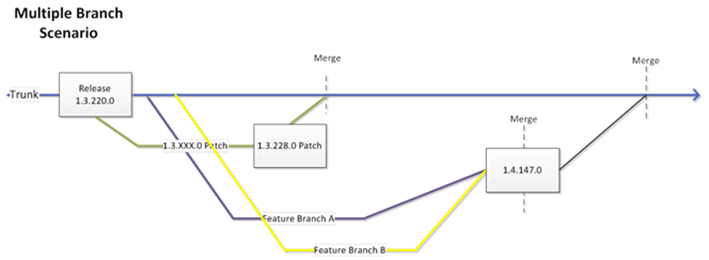

This approach lets you take work “in and out of scope” by simply not assigning a complexity to a task or requirement. That way you can come up with deltas which can be used in negotiations (e.g. X days effort without requirements Y and Z).

Result: Outcomes

Once you have defined the work, set complexity estimates, the spread sheet will start to produce some numbers for you. This information does not need to be manually tweaked (consider it read-only).

The Effort Calculations work sheet shows you how the numbers are calculated. Essentially, the idea is to count up the number of rows which feature a complexity rating, and then multiply the number by the total hours which has been set for each complexity.

This also allows for a calculation of the total effort by discipline, e.g. total number of hours for design, development (build) and so on.

Note that the numbers calculated are gross hours, and do not factor in the size of a team, etc. It’s up to you to make a determination from the raw total (gross) hours, based on the size and makeup of your team.

Result: Deliverables

If you have a list of high level deliverables which you need to provide indicative effort forecasts for, you can use the total effort numbers (by discipline) to derive some halfway decent estimates. The key is to assign each deliverable as a percentage of the total effort from one discipline (typically, the discipline which would be most responsible for delivery of the item).

Summary

This is obviously a very simple spread sheet, and the calculations made aren’t in any way close to being hyper-accurate. The idea is that this provides a team with a handy mechanism to document and trace effort against requirements, and provide a bit of a framework for distributing effort to project tasks (like documentation, testing etc.) across the total effort.

It’s by no means a comprehensive tool, and it doesn’t factor in a number of different considerations, e.g. the skills and discrete role definitions on a delivery team, whether the project is Greenfields (new development) and also doesn’t distinctly include contingency/buffer.

I hope that this provides you with some inspiration or at least some food for thought the next time you need to build some effort estimations. All feedback welcomed!

Oh – and Happy New Year – 2014.

R

7 thoughts on “A simple project effort estimation utility”

Appreciated, the way you have specify Estimation Efforts, Rob Sanders!

This was really helpful. Thank you! I was working on my own xls on complexity and so happy when I found yours.

I do have 1 question – do you ever use PERT 3 point technique to do estimations? If yes, how would you compare it to bottom up or top down?

Again – Thank you!

Re: Three-point estimation

Generally, no, I’ve had more success using high/low boundaries (best case, worst case) and task/WBS priority/weighting. If you mix this with standard project management metrics and variables (skills/skill types, availability plus contingencies) you can get a reasonably good estimate. The hard part is trying to factor in tasks which carry complete unknowns – in these cases, have broken them into “special cases” and tried to apply task breakdown across the piece of work, i.e. in acquiring a black box piece of technology, factoring in governance costs, training etc.

Hi,

I really appreciate the way you have specify and explain how I should perform Estimation Efforts for the new project. This guidance will help me a lot in my first project where I need to do this kind of work. If it’s not too much to ask, can you send me a sample of The Estimation Spread.

Sure, there’s a link at the bottom of the article to a copy of the Excel workbook. I tested it last month, it’s still working.

On the requirements mapping tab, where do you derive the column b values?

Those values are reserved for linking to an external system (e.g.VSTS, DOORS, MS Project), the field is unconstrained.